DISCUSSION

Availability of Resources & Reports

A fundamental difficulty with this study is that it relies on the toolkits, guidelines, reports and other documents publicly accessible from the organization websites. This introduces the very real possibility (indeed, likelihood) that these documents are not a representative sample of the work being done by these organizations. However, one could reasonably assume that the documents publicly accessible (and therefore included in this study) were selected to be posted online based on their quality, their relevance to current humanitarian crises, or their treatment of current issues in humanitarian aid, and are therefore worthy of consideration here.

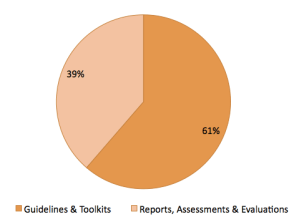

Two types of documents were sought for inclusion in this study (see Chart 1):

- Documents such as guidelines or toolkits which outline prescribed practices to be used in the field when carrying out a particular stage of the program cycle; and

- Reports, such as assessments, appraisals, or evaluations of actual aid responses or programs carried out by the organization.

Chart 1: Documents Reviewed by Type

Position papers, policy manuals, or best practices for action were not included unless they specifically described qualitative methodology. Only documents authored by the organizations studied were included. The UNICEF website, for example, includes a number of links to useful sources for methods and techniques, but most of them lead to documents authored by outside entities rather than by UNICEF itself. Similarly, some of the documents found on the UN agency websites were accessible only to internal UN audiences, and were therefore not included in this study.

Additionally, documents of either type listed above that did not describe specific qualitative methodology were excluded; this eliminated a large number of documents that were available on the websites but did not contain the information this study sought. For example, the documents on the MSF and Oxfam websites were largely reports that seemed designed for public consumption, reporting on monies spent and work completed, rather than actual toolkits or program reports. Overall, no more than six documents from any one organization were included, and neither MSF nor Oxfam currently has any documents in the study, since none met the above criteria.

INNOVATIVE PRACTICES

Two innovative techniques encountered during this study struck me and merit additional discussion.

Method #1: Station Days, Catholic Relief Services

Station Days is a tool developed by Catholic Relief Services in 2003 to include the active participation of children in monitoring and evaluation (M&E) efforts. It is designed to make participation in M&E fun for children so that they are motivated to take part, which improves the quality of the data received. The process is “designed to meet, satisfy and reinforce each of the key rationales for M&E activities that include child participation, namely creating child-friendly environments positively increases children’s cooperation and frees them to express themselves, resulting in higher quality and more reliable data” (p. 2).

Station Days is so named because of a series of six “stations” the children visit throughout the event:

- The Gate Station: This first station includes a sign-in for all participants. Staff complete a registration form for each child. Once registration forms are completed, staff give each child a ticket that contains the child’s name, the date, and a checklist of stations. The ticket enables staff to monitor whether children pass through each station and to identify any missed stations; it is also an important M&E control. Staff collect these tickets at the last station.

- The Clinic Station: A temporary clinic is set up and managed by a nurse and/or community health worker. The data collected and services provided depend on the context in which the specific project operates, as well as the standard health services available in the area. Generally, the clinic station focuses on collecting data about previous health problems, identifying and treating minor illnesses or injuries, and issuing referrals to nearby health facilities for major illnesses or injuries.

- The Counseling Station: Social workers or counselors manage this station to assess children’s psychosocial and emotional well-being as well as their lives at school and home. Staff running this station also provide counseling as needed by the child. The length and extent of this one-on-one counseling depends on the specific needs of each child, and it should last as long as necessary to meet those needs. The project manager creates a schedule of topics for the life of the project, and assigns one topic area to each Station Day. These topic areas are prioritized so that potentially dangerous or harmful situations are identified as early as possible.

- The Meet Grandmother or Uncle Station: Some beneficiaries may live in child-headed households, where they lack parental support and guidance. The ‘Meet Grandmother or Uncle’ station provides orphans and vulnerable children (OVC) with the valuable experience of interacting with their elders and provides opportunities to share their challenges and aspirations. Additionally, this station provides the opportunity to solicit the beneficiary perspective on project implementation. Community elders run this station and provide children with preventive messages and basic life skills education. This station is organized as an informal discussion in which elders from the community or older staff members who reside in the community work with the children to provide guidance and assess how their needs are being met by the project and what gaps remain.

- The Library or School Station: This station is meant to assess children’s academic performance and attendance. Because in-school and out-of-school children have unique challenges and needs, participants at this station are split into two groups of 8-10 participants, one group for in-school and one group for out-of-school. Staff should be sensitized not to stigmatize either group with large signs or other indicators, and should split the groups as discreetly as possible. Children who attend school are instructed beforehand to bring their school exercise books and homework books to Station Days. Staff or volunteers can monitor daily school attendance through children’s schoolwork. They can also review the students’ performance and identify areas that may need additional attention.

- The Gifts/Tokens Station: At this station participants’ tickets are collected. Staff carefully review each ticket to ensure there are signatures or initials beside each station. If a child has missed a station, staff assist the child in returning to the missed station. Participants receive a token or gift for their completed ticket. These tokens should be things that children need in their daily lives such as soap, toothpaste, clothes or books.

Source: CRS. Maj, M. et al. Guidance for Implementing Station Days: A Child-Centered Monitoring & Evaluation Tool. Catholic Relief Services. Baltimore, MD. 2009. Accessible here.

Method #2: Photography & Videos for Qualitative Research

From the Save the Children blog: “Participatory Video (PV) is a set of techniques to involve a group or community in shaping and creating their own film. The idea behind this is that making a video is easy and accessible, and is a great way of bringing people together to explore issues, voice concerns or simply to be creative and tell stories. This process can be very empowering, enabling a group or community to take action to solve their own problems and also to communicate their needs and ideas to decision-makers and/or other groups and communities. As such, PV can be a highly effective tool to engage and mobilize marginalized people and to help them implement their own forms of sustainable development based on local needs.”

Source: STC. “Participatory Video: A Qualitative Method of Monitoring & Evaluation.” Design, Monitoring & Evaluation – Save the Children (blog). Posted 20 October 2009. Accessible here.

Two additional sources for photography as a participatory approach are:

- Segars, G. “Visible Rights Conference Sequels: A Participatory Photography Toolkit for Practitioners and Educators.” Cultural Agents Initiative. Spring 2007. Accessible here.

- Wang, C., Burris, M.A., & Ping, X.Y. “Chinese Village Women as Visual Anthropologists: A Participatory Approach.” Social Science & Medicine. Vol. 42, No. 10, Pp. 1391-1400. 1996. Accessible here.

Guidance on analyzing video content can be found in: Spiers, J.A. “Tech Tips: Using Video Management/Analysis Technology in Qualitative Research.” International Journal of Qualitative Methods, Vol. 3, No. 1, April 2004. Accessible here.

QUALITY OF ESTABLISHED GUIDELINES & TOOLKITS

As mentioned in the findings, the toolkit and guideline documents contained a wealth of information on participatory and other qualitative methods, including, in most cases, wonderfully detailed instructions on how to use these data-gathering techniques, in what situations they are appropriate, and how to properly train the staff who carry them out.

One additional resource merits discussion here: The Good Enough Guide. It was developed by the Emergency Capacity Building Project (ECB) as a collaborative effort among numerous humanitarian organizations, including CARE, Catholic Relief Services, the International Rescue Committee, Mercy Corps, Oxfam, Save the Children, and World Vision. This guide provides a cross-organizational look at accountability and measuring performance in emergencies, including tools for conducting interviews, focus groups, observation, and surveys. The Good Enough Guide is accessible here.

CONCLUSIONS

The question becomes, then, with such a plethora of guidelines and toolkits available, why does the evidence indicate such a mismatch between prescribed and utilized methods? The most commonly used method in the reports studied was key informant interviews; but why? I would imagine that interviews seem simpler to conduct, don’t appear to require specific training, don’t require involving larger groups of people (as is the case for focus groups or many of the participatory methods), and involve persons who are easier to access.

We have seen that participatory methods are far and away the most prescribed methods for qualitative data collection, so why don’t we see them being used more? Is it because training on these participatory methods isn’t available for folks who are conducting research? Are the guides just released and “available” while staff members aren’t actually trained on how to use them? There appears to be no mechanism for enforcing the use of a variety of techniques; there are best practices, but perhaps no penalties for not using them. Key informant interviews and a smattering of other techniques here and there seem to be “good enough”.

So what can and should be done to ensure consistent, high-quality qualitative information throughout the program cycle? Training for staff needs to be not only available but required for anyone engaging in qualitative research. In addition, some stages of the program cycle need more emphasis on qualitative methods, which can glean information not captured through quantitative means. Instead of simply providing guidelines, organizations should set requirements for each stage of the program cycle to use a cross-section of techniques rather than relying so heavily on key informant interviews.

OUTSTANDING SOURCES FOR METHOD GUIDANCE

World Food Programme. Emergency Food Security Assessment Handbook, Second Edition. WFP. Rome, Italy. January 2009. Accessible here.

Barton, T. Program Impact Evaluation Process, Module 2: M&E Toolbox. CARE Uganda. 1998. Accessible here.

IFRC. Handbook for Monitoring & Evaluation, 1st Edition. International Federation of Red Cross & Red Crescent Societies. Geneva, Switzerland. October 2002. Accessible here.

World Vision. Rapid Assessment Procedures (RAP): Addressing the Perceived Needs of Internally Displaced Persons in Gulu District, Uganda. World Vision. 14 September 2000. Accessible here.

IFRC. Vulnerability and Capacity Assessment Toolbox. International Federation of Red Cross and Red Crescent Societies. Geneva, Switzerland. October 1996. Accessible here.

UNHCR. “The UNHCR Tool for Participatory Assessment in Emergency Operations, First Edition.” United Nations High Commissioner for Refugees. Geneva, Switzerland. May 2006. Accessible here.

UNHCR. “Handbook for Planning and Implementing Development Assistance for Refugees (DAR) Programmes.” United Nations High Commissioner for Refugees. Geneva, Switzerland. January 2005. Accessible here.